Vision-based End-to-end Control for Racing

This project investigates the feasibility of end-to-end vision-based control for high-speed autonomous racing. We develop a CNN-based feedback policy that maps onboard RGB images and wheel-encoder velocity feedback directly to steering and throttle commands, without relying on explicit mapping, localization, or global state estimation.
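To make the input/output interface concrete, here is a minimal PyTorch sketch of a policy with this shape: a convolutional image encoder whose features are concatenated with the scalar wheel speed and passed to a small head that outputs steering and throttle. The `VisionRacingPolicy` name, layer sizes, tanh/sigmoid output bounds, and the assumption that throttle lies in [0, 1] are illustrative choices, not the architecture used in this project.

```python
import torch
import torch.nn as nn


class VisionRacingPolicy(nn.Module):
    """Hypothetical CNN policy: RGB image + wheel speed -> (steering, throttle)."""

    def __init__(self, max_steer: float = 1.0):
        super().__init__()
        self.max_steer = max_steer
        # Small convolutional encoder; channel counts and strides are illustrative.
        self.encoder = nn.Sequential(
            nn.Conv2d(3, 24, kernel_size=5, stride=2), nn.ReLU(),
            nn.Conv2d(24, 36, kernel_size=5, stride=2), nn.ReLU(),
            nn.Conv2d(36, 48, kernel_size=3, stride=2), nn.ReLU(),
            nn.Conv2d(48, 64, kernel_size=3, stride=1), nn.ReLU(),
            # Pool to a fixed 4x4 map so the head does not depend on image resolution.
            nn.AdaptiveAvgPool2d((4, 4)),
            nn.Flatten(),
        )
        # Head fuses the flattened image features with the 1-D speed input.
        self.head = nn.Sequential(
            nn.Linear(64 * 4 * 4 + 1, 128), nn.ReLU(),
            nn.Linear(128, 2),
        )

    def forward(self, image: torch.Tensor, speed: torch.Tensor) -> torch.Tensor:
        # image: (B, 3, H, W), assumed scaled to [0, 1]
        # speed: (B, 1), wheel-encoder velocity (assumed normalized)
        features = self.encoder(image)
        raw = self.head(torch.cat([features, speed], dim=1))
        steer = self.max_steer * torch.tanh(raw[:, 0:1])  # in [-max_steer, max_steer]
        throttle = torch.sigmoid(raw[:, 1:2])             # in [0, 1] (assumption: no reverse)
        return torch.cat([steer, throttle], dim=1)


# Usage: a batch of two 96x128 frames with their measured speeds.
policy = VisionRacingPolicy(max_steer=0.35)
actions = policy(torch.rand(2, 3, 96, 128), torch.rand(2, 1))
```

Bounding the outputs inside the network keeps every command within actuator limits by construction, which matters when the policy runs without a downstream safety filter.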

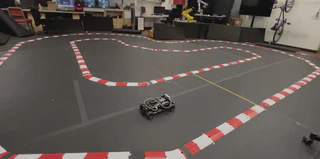

The policy is trained via imitation learning from an oracle Model Predictive Contouring Control (MPCC) expert with full-state access. Despite the significant sensing mismatch between the expert and the learned policy (the expert observes the full vehicle state, while the policy sees only camera images and wheel speed), the resulting controller drives stably and aggressively near the vehicle’s dynamic handling limits. The system is deployed on a 1:10-scale autonomous RC car equipped with an NVIDIA Jetson and a fully onboard ROS stack.
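The simplest form of this imitation step is behavior cloning: log the MPCC expert's commands alongside the camera frame and wheel speed at each timestep, then regress the student's outputs onto those commands. The sketch below assumes plain behavior cloning with a weighted MSE loss. The per-action weights, the `TinyPolicy` stand-in, and the synthetic data are hypothetical placeholders, and the project may have used a different loss or imitation learning scheme.

```python
import torch
import torch.nn as nn
from torch.utils.data import DataLoader, TensorDataset


def behavior_cloning_epoch(policy, loader, optimizer, action_weights=(1.0, 0.5)):
    """One epoch of behavior cloning: regress student actions onto expert actions.

    Each batch holds (image, speed, expert_action). expert_action comes from the
    full-state expert; the student only ever sees image and speed.
    """
    weights = torch.tensor(action_weights)  # per-dimension weights (steering, throttle)
    policy.train()
    total, count = 0.0, 0
    for image, speed, expert_action in loader:
        pred = policy(image, speed)
        # Weighted squared error, averaged over batch and action dimensions.
        loss = ((pred - expert_action) ** 2 * weights).mean()
        optimizer.zero_grad()
        loss.backward()
        optimizer.step()
        total += loss.item() * image.shape[0]
        count += image.shape[0]
    return total / count  # mean training loss over the epoch


class TinyPolicy(nn.Module):
    """Hypothetical stand-in for the real CNN, only to make the example runnable."""

    def __init__(self):
        super().__init__()
        self.conv = nn.Sequential(
            nn.Conv2d(3, 8, kernel_size=5, stride=4), nn.ReLU(),
            nn.AdaptiveAvgPool2d(1), nn.Flatten(),
        )
        self.fc = nn.Linear(8 + 1, 2)

    def forward(self, image, speed):
        return self.fc(torch.cat([self.conv(image), speed], dim=1))


torch.manual_seed(0)
# Synthetic placeholder data: 64 frames, speeds, and random "expert" actions.
data = TensorDataset(torch.rand(64, 3, 64, 64), torch.rand(64, 1), torch.rand(64, 2))
loader = DataLoader(data, batch_size=16, shuffle=True)
policy = TinyPolicy()
optimizer = torch.optim.Adam(policy.parameters(), lr=1e-3)
losses = [behavior_cloning_epoch(policy, loader, optimizer) for _ in range(5)]
```

Because the labels come from an expert that sees the full state, the student must infer that state from pixels alone; plain behavior cloning also trains only on states the expert visited, which is one source of the distribution-shift issues noted below.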

We evaluate the policy extensively, both in simulation (CARLA) and on hardware. In real-world experiments, the learned policy sustains high-speed rollouts of up to 80 consecutive laps without constraint violations and performs more consistently than traditional SLAM-based pipelines. These results highlight the potential of imitation learning as a practical pathway toward robust vision-based control for autonomous driving in dynamic regimes.
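The two hardware claims above (consecutive clean laps and consistency) can be made concrete with simple per-lap metrics. This is a hedged sketch: the `Lap` record, treating a violation as leaving the track bounds during a lap, and using the coefficient of variation of clean lap times as the consistency measure are all assumptions, since the text does not specify how these quantities were computed.

```python
from dataclasses import dataclass
from statistics import mean, pstdev
from typing import List


@dataclass
class Lap:
    lap_time_s: float
    violated: bool  # assumption: True if the car left the track bounds on this lap


def longest_clean_streak(laps: List[Lap]) -> int:
    """Length of the longest run of consecutive laps without a violation."""
    best = current = 0
    for lap in laps:
        current = 0 if lap.violated else current + 1
        best = max(best, current)
    return best


def lap_time_consistency(laps: List[Lap]) -> float:
    """Coefficient of variation of clean lap times (lower means more consistent)."""
    times = [lap.lap_time_s for lap in laps if not lap.violated]
    if not times:
        raise ValueError("no clean laps to evaluate")
    return pstdev(times) / mean(times)


# Hypothetical log: the third lap has a boundary violation.
laps = [Lap(10.0, False), Lap(10.2, False), Lap(9.8, True),
        Lap(10.1, False), Lap(10.0, False), Lap(9.9, False)]
streak = longest_clean_streak(laps)
cv = lap_time_consistency(laps)
```

Here `streak` is 3 (laps four through six), and the coefficient of variation is computed over the five clean laps only, so a violating lap does not mask or inflate the consistency score.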

Note: You may notice occasional boundary contact and slight trajectory oscillations toward the end of the run. These behaviors highlight limitations of pure imitation learning under distribution shift and directly motivated my subsequent work on safety-aware learning and constraint-aware policy optimization.

Principal Researcher (Nov 2023 – May 2024)

- Developed a CNN-based end-to-end controller for high-speed autonomous racing using RGB camera input and velocity feedback.

- Trained the policy via imitation learning on MPCC expert trajectories and deployed it on a 1:10-scale Jetson-powered vehicle with an onboard ROS stack.

- Designed and executed systematic policy evaluation in CARLA under various conditions (e.g., weather, lighting), measuring success rates and failure modes across diverse initial conditions to assess robustness near dynamic handling limits.

- Achieved long high-speed rollouts (up to 80 consecutive laps) without constraint violations, with more consistent performance than traditional SLAM-based pipelines across 10+ field tests.
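The CARLA evaluation described in these bullets amounts to bucketing episodes by condition and tallying outcomes. A minimal stdlib sketch follows; the `Episode` record, the (weather, lighting) condition keys, and failure labels such as `"off_track"` are hypothetical, and a real harness would populate them from CARLA rollouts rather than by hand.

```python
from collections import Counter, defaultdict
from dataclasses import dataclass
from typing import Dict, List, Optional, Tuple


@dataclass
class Episode:
    weather: str
    lighting: str
    failure: Optional[str] = None  # None if the episode succeeded, else a failure label


def summarize(episodes: List[Episode]) -> Tuple[Dict[Tuple[str, str], float], Counter]:
    """Success rate per (weather, lighting) condition plus overall failure-mode counts."""
    totals: Dict[Tuple[str, str], int] = defaultdict(int)
    successes: Dict[Tuple[str, str], int] = defaultdict(int)
    failures: Counter = Counter()
    for ep in episodes:
        key = (ep.weather, ep.lighting)
        totals[key] += 1
        if ep.failure is None:
            successes[key] += 1
        else:
            failures[ep.failure] += 1
    rates = {key: successes[key] / totals[key] for key in totals}
    return rates, failures


# Hypothetical results from six episodes under two conditions.
episodes = [
    Episode("clear", "noon"),
    Episode("clear", "noon"),
    Episode("clear", "noon", "off_track"),
    Episode("rain", "sunset", "off_track"),
    Episode("rain", "sunset", "collision"),
    Episode("rain", "sunset"),
]
rates, failures = summarize(episodes)
```

Keeping failure labels separate from the per-condition success rate makes it easy to see whether a drop in success under one condition comes from a single dominant failure mode or from several.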

Tech: PyTorch, CasADi, ROS, OpenCV, SLAM, RL, NVIDIA Jetson, real-time control.